10 essential A/B testing strategies for cold email in 2026

Andrea Lopez


These are the best cold email A/B testing strategies to improve conversion in 2026:

Set the right goal (stop using opens as the main metric)

Test the offer and angle before the subject line

Optimize the first sentence (first 150 characters)

Experiment with different CTAs (calls to action)

Find the ideal length for your audience

Design experiments that isolate true causality

Calculate sample size before you start

Avoid stopping the test when it looks like it is winning

Monitor deliverability during the test

Measure positive replies operationally

A/B testing in cold emails in 2026 is no longer about tweaking the subject line and chasing open rates.

Between privacy changes (which skew opens), stricter spam filters, and the reality of multichannel outreach, real optimization means tracking meaningful outcomes: positive replies, meetings booked, and pipeline conversion, without hurting deliverability.

The difference between a test that drives growth and one that misleads you usually comes down to fundamentals: a clear hypothesis, one primary variable per experiment, true randomization, and non-negotiable guardrails (bounces, spam complaints, unsubscribes, inbox placement).

Without these, your “winner” may simply be the version that lands in the inbox more often, not the one that persuades better.

In this post you will find 10 essential strategies to run cold email A/B tests properly: prioritize offer and angle over cosmetic tweaks, optimize the first 150 characters, adapt CTAs to problem awareness, calculate sample size, set stop criteria, and most importantly, learn by segment so your insights are portable and not just noise.

10 essential A/B testing strategies for cold email to improve conversion in 2026

1. Define the right objective (forget opens as your primary metric)

Open rate is a weak and increasingly unreliable signal. Apple Mail Privacy Protection preloads content, can inflate opens, and hides real location and timing data. Optimizing only for opens leads to false conclusions.

Better metrics hierarchy:

Primary KPI: positive response rate or meeting booking rate

Secondary: total replies, CTR if using links, conversion rate to demo

Guardrails: bounce rate, spam complaints, unsubscribes, delivery/inbox placement

Gmail recommends keeping spam rate below 0.10% and avoiding reaching 0.30% or higher. In Outlook, complaint rate should stay below 0.3% according to SNDS.

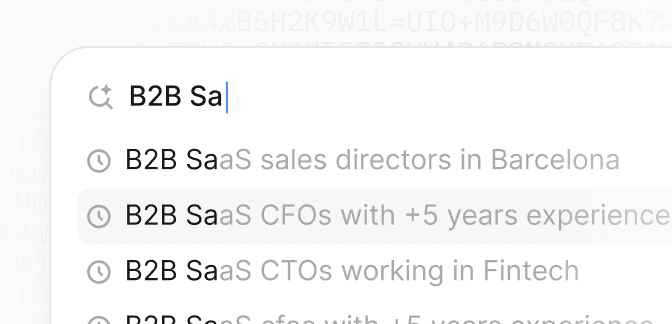

2. Test offer and angle before subject line

The first variable you should test is the one with highest impact on response: your value proposition.

High-impact offer variables:

Main pain point or trigger (cost, risk, time, revenue)

Concrete promise vs generic promise

Friction level: "Does Tuesday at 3pm work?" vs "Who should I reach out to?"

Start by changing the complete angle of your message before obsessing over subject lines. A weak offer won't be saved by a clever subject.

3. Optimize the first line (first 150 characters)

The first 150 characters are what prospects see in the email preview. This is where they decide whether to open or not.

Test:

Real personalization (recent event, public signal) vs generic template

Direct question vs short statement

Relevant context vs direct pitch

Genuine personalization based on real research generally beats templates with dynamic tokens, but test it with your own audience. "I saw you hired a Head of Sales in December" is not the same as "Hi {{name}}".

4. Experiment with different CTAs (calls to action)

The CTA defines your message's friction. A one-step CTA (directly to meeting) can work with warm audiences, but a two-step CTA usually gets better response in cold email.

Options to test:

1-step CTA: "Does Tuesday or Thursday at 11am work for you?"

2-step CTA: "Does it make sense to talk for 15 minutes?"

Closed vs open CTA

Direct question vs soft proposal

The optimal CTA varies widely with your ICP and the prospect's level of problem awareness.

5. Find the ideal length for your audience

There's no "perfect" length for all ICPs. 40-70 words usually works for very busy decision-makers, while 90-130 words may work better for technical buyers who need context.

Test:

Short and direct email vs email with more context

1 idea per email vs 2 ideas (more info, more noise)

Paragraph structure: one long vs several short

General rule: the more senior the role, the shorter the email. But always test with your real audience.

6. Design experiments that isolate real causality

A poorly designed test gives you false winners. To isolate real causality:

Minimum rules:

1 hypothesis, 1 main variable, 1 KPI

Real randomization at lead level

Keep constant: domain, sending pattern, number of follow-ups, segmentation, calendar

Don't mix ICPs: stratify by domain type (gmail/outlook/corporate), country, or vertical

If you test subject AND length AND CTA at once, you won't know what caused the result. One change per test.
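The randomization and stratification rules above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed implementation: the lead fields (`email`, `domain_type`, `vertical`), the function name and the fixed seed are all assumptions made for the example.

```python
import random
from collections import defaultdict

def assign_variants(leads, strata_keys, variants=("A", "B"), seed=42):
    """Randomly assign leads to variants inside each stratum (hypothetical helper)."""
    rng = random.Random(seed)  # fixed seed so the split can be reproduced and audited
    by_stratum = defaultdict(list)
    for lead in leads:
        by_stratum[tuple(lead[k] for k in strata_keys)].append(lead)

    assignment = {}
    for stratum in sorted(by_stratum):
        group = by_stratum[stratum]
        rng.shuffle(group)  # randomization happens at lead level
        for i, lead in enumerate(group):
            # Alternating after the shuffle keeps variants balanced per stratum
            assignment[lead["email"]] = variants[i % len(variants)]
    return assignment

# Illustrative leads: three strata with four leads each
leads = [
    {"email": f"lead{i}@example.com", "domain_type": dt, "vertical": v}
    for i, (dt, v) in enumerate(
        [("gmail", "saas"), ("corporate", "saas"), ("corporate", "manufacturing")] * 4
    )
]
assignment = assign_variants(leads, strata_keys=("domain_type", "vertical"))
```

Shuffling inside each stratum and then alternating variants means A and B receive the same mix of domain types and verticals, so a difference in results is less likely to come from list composition.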

7. Calculate sample size before starting

In cold email, rates are low (1%-8%). With low conversions, you need large samples to detect real improvements.

Approximate examples (per variant, consistent with a two-sided 5% significance level and 80% power):

Baseline 4% → 5.5% (+1.5 points): ~3,100 contacts per variant

Baseline 4% → 5% (+1 point): ~6,700 per variant

Baseline 1% → 1.5% (+0.5 points): ~7,700 per variant

If you don't have that volume, don't force significance. Accumulate tests, use weekly cycles, or group learnings by similar segments. Online sample size calculators for proportion tests, such as Optimizely's or Evan Miller's, do this math for you.
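If you prefer to compute it yourself, here is a minimal sketch using the standard normal-approximation formula for comparing two proportions. The 5% two-sided significance level and 80% power are assumptions for the example (the function name is hypothetical too); with those settings the results land close to the approximate figures above.

```python
from math import ceil
from statistics import NormalDist

def sample_size_per_variant(p_base, p_target, alpha=0.05, power=0.80):
    """Contacts needed per variant to detect p_base -> p_target (two-sided test)."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for significance
    z_beta = NormalDist().inv_cdf(power)           # critical value for power
    variance = p_base * (1 - p_base) + p_target * (1 - p_target)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p_target - p_base) ** 2)

for base, target in [(0.04, 0.055), (0.04, 0.05), (0.01, 0.015)]:
    n = sample_size_per_variant(base, target)
    print(f"{base:.1%} -> {target:.1%}: about {n} contacts per variant")
```

Note how quickly the requirement grows as the expected lift shrinks: the squared difference sits in the denominator, so halving the lift roughly quadruples the sample.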

8. Avoid stopping the test when "it looks like it's winning"

Checking results every hour and stopping when you like the winner inflates false positives. Define stopping criteria before starting.

Common statistical traps:

Stopping when "it looks like it's winning" (the peeking problem: every extra look at the data is another chance to see a false positive)

Testing too many variants at once (multiple comparisons)

Solution: define minimum sample or well-planned sequential testing before starting. Respect the criteria even if it's hard.
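One way to make the stopping rule hard to cheat is to encode it. The sketch below is an assumption-laden illustration: it uses a pooled two-proportion z-test, refuses to name a winner before the planned minimum sample is reached, and applies a Bonferroni correction when several variants are compared against the control. Function names and the example counts are hypothetical.

```python
from math import sqrt
from statistics import NormalDist

def two_proportion_p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value of a pooled two-proportion z-test."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0  # no conversions (or all conversions): nothing to compare
    z = (conv_b / n_b - conv_a / n_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))

def evaluate(conv_a, n_a, conv_b, n_b, min_n, alpha=0.05, comparisons=1):
    """Return a decision only once the pre-registered sample size is reached."""
    if min(n_a, n_b) < min_n:
        return "keep running"  # no peeking-based decisions
    p_value = two_proportion_p_value(conv_a, n_a, conv_b, n_b)
    if p_value >= alpha / comparisons:  # Bonferroni: stricter bar with more variants
        return "inconclusive"
    return "B wins" if conv_b / n_b > conv_a / n_a else "A wins"

# Illustrative counts of positive replies, not real campaign data
early_look = evaluate(40, 1000, 62, 1000, min_n=3100)
single_test = evaluate(124, 3100, 160, 3100, min_n=3100)
three_variants = evaluate(124, 3100, 160, 3100, min_n=3100, comparisons=3)
```

With these made-up counts, the early look returns "keep running" however good B appears, and the same final result that passes as a single comparison becomes "inconclusive" once it is one of three comparisons.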

9. Monitor deliverability during the test

Your tests can "win" for the wrong reasons. If you change things that affect filters (aggressive tracking, links, spammy words), you can modify inbox placement and bias results.

Requirements and signals to keep in check:

Gmail requires authentication (SPF, DKIM, DMARC) for bulk senders

Outlook tightens criteria for high-volume senders (non-compliant mail is routed to Junk starting May 2025)

Implement easy unsubscribe and one-click unsubscribe with RFC 8058

Add "stop-loss" guardrails: if bounces or complaints rise above your threshold, stop the test even if the KPI goes up.
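A stop-loss check can be as simple as the sketch below. The limits reuse the guardrail values from the briefing template later in this post (bounce 2%, spam complaints 0.1%, unsubscribes 0.5%); the function name, the dictionary keys and the example counts are hypothetical.

```python
# Limits taken from the briefing template in this post; adjust to your own policy
GUARDRAILS = {"bounce": 0.02, "spam_complaint": 0.001, "unsubscribe": 0.005}

def breached_guardrails(sent, counts, limits=GUARDRAILS):
    """Return the names of guardrails whose observed rate is at or above the limit."""
    if sent == 0:
        return []
    return [
        name for name, limit in limits.items()
        if counts.get(name, 0) / sent >= limit
    ]

# Illustrative numbers for one variant after 1,000 sends
variant_b = {"bounce": 9, "spam_complaint": 2, "unsubscribe": 3}
breaches = breached_guardrails(sent=1000, counts=variant_b)
if breaches:
    print("Pause variant B:", ", ".join(breaches))
```

Running the check per variant, not only on the campaign total, matters here: a single variant can breach a limit while the blended average still looks healthy.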

10. Measure "positive response" operationally

Classify responses automatically but review a sample:

Positive: real interest, asks for info, accepts meeting, refers to decision-maker

Neutral: "not now", "try in Q2", "send me something"

Negative: "no", "not a fit", "unsubscribe"

Noise: OOO, auto-replies, bounces

Optimize for "positives" and "meetings", not total replies. A 15% reply rate means nothing if 12 of those 15 points are "not interested".
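As a starting point for automatic classification, a simple phrase-matching pass already separates the four buckets above. This is a deliberately naive sketch: the phrase lists are illustrative assumptions, not a validated taxonomy, and a manual review of a sample is still needed.

```python
# Order matters: "not interested" must be checked before "interested"
RULES = [
    ("noise", ("out of office", "auto-reply", "automatic reply", "undeliverable")),
    ("negative", ("not interested", "not a fit", "unsubscribe", "remove me")),
    ("neutral", ("not now", "try in q", "send me something", "send me info")),
    ("positive", ("interested", "let's talk", "tell me more", "happy to chat")),
]

def classify_reply(text):
    """Return the first matching label, or 'unclassified' for manual review."""
    lowered = text.lower()
    for label, phrases in RULES:
        if any(phrase in lowered for phrase in phrases):
            return label
    return "unclassified"

def positive_rate(replies, contacts_sent):
    """Positive replies divided by contacts sent (not by total replies)."""
    positives = sum(1 for reply in replies if classify_reply(reply) == "positive")
    return positives / contacts_sent

replies = [
    "I'm interested, tell me more",
    "Not interested, thanks",
    "Try in Q2",
    "Out of office until Monday",
]
labels = [classify_reply(reply) for reply in replies]
```

Anything that falls into "unclassified" is a good candidate for the manual sample, and the phrases you find there can feed back into the rules.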

Why A/B testing in cold email is different from email marketing

Low volumes and more complex conversions

In traditional email marketing you can run tests with millions of sends and optimize for clicks. In cold email, you work with hundreds or thousands of contacts and optimize for real conversations.

This changes everything:

You need more discipline in experimental design

Sample sizes are smaller, so statistical significance is harder to achieve

You can't afford to "test 10 variants" at once

Deliverability is more fragile

In cold email, your domain reputation builds slowly and breaks quickly. A poorly executed test can burn your domain in days.

Key differences:

In marketing: users subscribed, high engagement, low spam rate

In cold email: unsolicited contact, low engagement, high sensitivity to filters

That's why every test must include deliverability guardrails.

Context matters more than copy

In cold email, timing, ICP, and channel matter as much or more than exact words. A perfect message sent to the wrong person or at the wrong time fails.

This means you must stratify your tests by relevant segments: role, vertical, company size, geography. What works for CTOs in tech startups can completely fail with CFOs in manufacturing.

The biggest mistakes when doing A/B testing in cold email

1. Testing without sufficient volume

The most common mistake: launching a test with 100 emails per variant and declaring a winner with 2 more responses.

With 3-5% conversions, you need thousands of contacts to detect improvements of 1-2 percentage points. If you don't have that volume, it's better to:

Accumulate learnings over several weeks

Test bigger changes (a minimum detectable effect of 3-5 points)

Use a qualitative approach and learn from the responses you get
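To see why 100 emails per variant is not enough, you can turn the sample size question around and ask what lift a given volume can detect at all. The sketch below is a rough approximation under assumed settings (5% two-sided significance, 80% power, and the baseline variance used for both variants, which slightly understates the lift required); the function name is hypothetical.

```python
from math import sqrt
from statistics import NormalDist

def approx_mde(baseline, n_per_variant, alpha=0.05, power=0.80):
    """Approximate minimum detectable lift (absolute) for a given sample size."""
    z = NormalDist().inv_cdf(1 - alpha / 2) + NormalDist().inv_cdf(power)
    return z * sqrt(2 * baseline * (1 - baseline) / n_per_variant)

for n in (100, 500, 3000):
    lift = approx_mde(0.04, n)
    print(f"{n} contacts per variant: detectable lift of about {lift * 100:.1f} points")
```

At a 4% baseline, 100 contacts per variant can only detect a lift of several points, far more than most copy changes deliver. That is the quantitative case for testing bigger changes when volume is low.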

2. Changing multiple variables at once

You test subject, length, CTA and offer simultaneously. The result "wins" but you don't know why.

Consequence: you can't replicate the learning in other campaigns. Knowledge isn't transferable.

Solution: 1 hypothesis, 1 variable, 1 test.

3. Ignoring ICP segmentation

What works for one segment can completely fail in another. A message that resonates with tech startups can sound ridiculous to traditional industrial companies.

Problem: you average results from very different ICPs and draw invalid conclusions.

Solution: stratify by key variables (vertical, size, role, geography) and analyze results by segment.
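The averaging problem is easy to reproduce. In the sketch below the counts are invented purely for illustration: overall, variants A and B look almost identical, yet each one clearly wins in a different segment. The helper names are hypothetical.

```python
from collections import defaultdict

def rates_by_segment(results):
    """results: iterable of (segment, variant, positive). Returns {segment: {variant: rate}}."""
    counts = defaultdict(lambda: defaultdict(lambda: [0, 0]))  # [positives, total]
    for segment, variant, positive in results:
        cell = counts[segment][variant]
        cell[0] += int(positive)
        cell[1] += 1
    return {
        segment: {variant: pos / total for variant, (pos, total) in by_variant.items()}
        for segment, by_variant in counts.items()
    }

def rows(segment, variant, positives, total):
    """Expand aggregate counts into per-lead rows (made-up data for the example)."""
    return [(segment, variant, i < positives) for i in range(total)]

results = (
    rows("tech startups", "A", 3, 100) + rows("tech startups", "B", 8, 100)
    + rows("industrial", "A", 6, 100) + rows("industrial", "B", 2, 100)
)
rates = rates_by_segment(results)
overall = rates_by_segment(("all", variant, positive) for _, variant, positive in results)
```

Here the blended rates are 4.5% for A and 5% for B, which would read as "no real difference", while the per-segment view shows B ahead in tech startups and A ahead in industrial companies. Per-segment samples are smaller, so treat such splits as hypotheses to confirm, not as final winners.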

4. Optimizing for wrong metrics

You optimize for open rate because it's easy to measure. The problem: opens don't translate to meetings.

Metric traps:

Open rate: inflated by Apple Mail Privacy, doesn't predict response

Total reply rate: includes "not interested", OOOs and bounces

CTR: clicks without context don't generate pipeline

Solution: optimize for positive response, useful conversations and scheduled meetings.

How multichannel prospecting affects A/B testing

Email + LinkedIn + calls = complete context

Traditionally sales prospecting is done through isolated channels (email, LinkedIn, phone outreach). This fragments context and makes it difficult to understand which channel or message generated the response.

In multichannel prospecting:

A prospect can see your email, visit your LinkedIn, and respond days later

"Conversion" can come from the combination of touchpoints, not just one

Measuring attribution becomes complex

Implication for A/B testing: if you do multichannel outreach, your email tests must consider the effect of other channels.

Test complete cadences, not isolated messages

Instead of testing just the first email, test the complete cadence:

Email 1 (day 0) + LinkedIn connection (day 2) + Email 2 (day 5) + call (day 7)

vs

Email 1 (day 0) + Email 2 (day 3) + LinkedIn message (day 5) + Email 3 (day 8)

This requires more volume and more robust experimental design, but gives you much more actionable learnings about the complete flow.

Attribution: which touchpoint generated the response

If someone responds after receiving 3 emails and 2 LinkedIn messages, which one generated the conversion?

Possible attribution models:

First-touch: first contact gets credit

Last-touch: last touchpoint before responding

Multi-touch: proportional distribution among all touchpoints

Time-decay: more weight to recent touchpoints

For cold email, the most useful model is usually last-touch or time-decay, because prospects tend to respond after seeing your message several times.
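The four models differ only in how they spread one unit of credit across the touchpoints. A minimal sketch, assuming touchpoints are listed in chronological order, that "multi-touch" means an equal split, and that time-decay uses an exponential half-life of 3 days (all three are assumptions for the example, as are the names):

```python
def attribute(touch_days, response_day, model, half_life_days=3.0):
    """Return one credit weight per touchpoint; weights sum to 1."""
    n = len(touch_days)
    if model == "first_touch":
        weights = [1.0] + [0.0] * (n - 1)
    elif model == "last_touch":
        weights = [0.0] * (n - 1) + [1.0]
    elif model == "multi_touch":
        weights = [1.0] * n  # equal split across all touchpoints
    elif model == "time_decay":
        # Credit halves for every half_life_days between touch and response
        weights = [0.5 ** ((response_day - day) / half_life_days) for day in touch_days]
    else:
        raise ValueError(f"unknown model: {model}")
    total = sum(weights)
    return [w / total for w in weights]

# Cadence from the example above: email, LinkedIn, email, call; reply on day 8
touch_days = [0, 2, 5, 7]
for model in ("first_touch", "last_touch", "multi_touch", "time_decay"):
    print(model, [round(w, 2) for w in attribute(touch_days, 8, model)])
```

Whatever model you pick, apply the same one to every variant of a test; switching models between variants changes the result as much as the copy does.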

The role of technical infrastructure in A/B testing

Authentication: SPF, DKIM and DMARC

If your authentication isn't properly configured, you can "win" a test simply because one variant delivers better, not because the copy is better.

Minimum requirements:

SPF: authorizes IPs/hosts to send for your domain

DKIM: cryptographic signature that validates the message wasn't modified

DMARC: connects SPF and DKIM with From domain and defines policy

Gmail specifies that for direct mail, the From: domain must align with the SPF or DKIM domain to comply with DMARC. Microsoft has similar requirements for high-volume senders.

For A/B testing: maintain the same authentication configuration in all variants. If you change sending domains between A and B, you introduce a technical variable that biases results.

Sending domains and warmup

A new domain without reputation will have worse deliverability than an established one. If you test using different domains, you're not testing copy, you're testing reputation.

Rules:

Use the same sending subdomain for all test variants

If you need to scale and add domains, do it after the test, not during

Maintain the same warmup pattern (volume, frequency, engagement) between variants

Tracking pixels and links: how they affect filters

Tracking pixels and URL shorteners can trigger spam filters.

Impact on A/B testing:

If one variant has aggressive tracking and the other doesn't, the deliverability difference can be huge

URL shorteners (bit.ly, ow.ly) are spam signals for many filters

Gmail and Outlook can filter emails with high link ratio

Solution: maintain the same tracking level and link structure in all variants. If you want to test CTAs with links, use the same number of links with similar-length URLs.
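A quick pre-flight check can catch link differences between variants before you send. This sketch is an illustration under simple assumptions: plain-text bodies, a basic URL regex, and a shortener list containing only the two services named above. The function names and example URLs are hypothetical.

```python
import re
from urllib.parse import urlparse

URL_PATTERN = re.compile(r"https?://[^\s<>]+")
SHORTENER_HOSTS = {"bit.ly", "ow.ly"}  # the two named in the text; extend as needed

def link_profile(body):
    """Count links in an email body and flag known URL shorteners."""
    urls = URL_PATTERN.findall(body)
    hosts = [(urlparse(url).hostname or "") for url in urls]
    return {
        "link_count": len(urls),
        "uses_shortener": any(host in SHORTENER_HOSTS for host in hosts),
    }

def comparable(body_a, body_b):
    """True when both variants have the same number of links and no shorteners."""
    a, b = link_profile(body_a), link_profile(body_b)
    same_count = a["link_count"] == b["link_count"]
    return same_count and not (a["uses_shortener"] or b["uses_shortener"])

variant_a = "Worth a look? https://example.com/case-study"
variant_b = "Worth a look? https://bit.ly/abc123 or https://example.com/demo"
ok_to_send = comparable(variant_a, variant_b)
```

In this example the check fails twice over: variant B carries one more link than A and uses a shortener, so any difference in replies could be a filtering effect.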

Seed testing to measure inbox placement

You can "win" a test because one variant falls less into spam, not because it generates more responses.

Seed testing allows you to measure placement (inbox vs spam vs missing) with control accounts before scaling.

How to apply it:

Send A and B with the same pattern to a seed list (Gmail, Outlook, corporate accounts)

Measure real placement: how many reach inbox, how many to spam

If A "wins" in responses but its placement drops to spam in certain providers, you're buying short-term performance and medium-term degradation

Deliverability tools usually offer seed tests, but you can create your own setup with test accounts.
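If you run your own seed setup, the analysis is a tally per variant and provider. A minimal sketch with made-up seed results (the function name, tuple layout and counts are assumptions for illustration):

```python
from collections import Counter, defaultdict

def placement_rates(seed_results):
    """seed_results: iterable of (variant, provider, placement), where placement is
    'inbox', 'spam' or 'missing'. Returns {(variant, provider): {placement: share}}."""
    tallies = defaultdict(Counter)
    for variant, provider, placement in seed_results:
        tallies[(variant, provider)][placement] += 1
    return {
        key: {placement: count / sum(counter.values())
              for placement, count in counter.items()}
        for key, counter in tallies.items()
    }

# Invented results for ten Gmail seed accounts per variant
seed_results = (
    [("A", "gmail", "inbox")] * 8 + [("A", "gmail", "spam")] * 2
    + [("B", "gmail", "inbox")] * 5 + [("B", "gmail", "spam")] * 4
    + [("B", "gmail", "missing")] * 1
)
rates = placement_rates(seed_results)
```

Seed lists are small, so read these shares as a directional signal: a large gap between variants at one provider is a reason to investigate before trusting the reply numbers.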

Legal and compliance considerations in Spain/EU

LSSI and prior consent

In Spain, sending commercial communications by email is conditioned by LSSI (general rule: prior consent; typical exception: prior contractual relationship and similar products/services).

For cold B2B outbound, this creates legal friction. AEPD has reiterated this in resolutions and criteria.

Minimize risk:

Transparency: who you are, why you're contacting

Clear unsubscribe in first email

Opposition and suppression records

Legal review according to country, data type and recipient

GDPR and legal bases for data processing

For personal data processing in direct marketing under GDPR, you must have a legal basis, respect the recipient's reasonable expectations, and honor the right to object.

Most common legal bases in B2B:

Consent: difficult to obtain in cold email

Legitimate interest: possible if prospect is relevant to your business and prospecting is reasonable

Contract execution: only if there's prior relationship

The ePrivacy framework also applies. The combination of GDPR, ePrivacy and LSSI makes cold B2B email in Spain complex terrain.

Impact on A/B testing

Problem: if your legal basis is weak or your unsubscribe process isn't clear, you may receive more spam complaints in certain tests, not because of copy but because of perception of intrusion.

Solution:

Always include a clear unsubscribe mechanism (preferably one-click)

Add transparency about who you are and why you're contacting

Maintain updated suppression lists and respect unsubscribes immediately

Keep in mind that a variant with a more aggressive tone or frequency may increase legal friction
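For the one-click mechanism, RFC 8058 relies on two message headers: `List-Unsubscribe` with an HTTPS URL and `List-Unsubscribe-Post` with the fixed value `List-Unsubscribe=One-Click`. The sketch below sets them with Python's standard `email` library; the addresses, the unsubscribe URL and the function name are placeholders, and your sending platform may already add these headers for you.

```python
from email.message import EmailMessage

def build_message(sender, recipient, subject, body, unsubscribe_url):
    """Build a plain-text email carrying RFC 8058 one-click unsubscribe headers."""
    msg = EmailMessage()
    msg["From"] = sender
    msg["To"] = recipient
    msg["Subject"] = subject
    # RFC 8058: an HTTPS URI in List-Unsubscribe plus the fixed List-Unsubscribe-Post value
    msg["List-Unsubscribe"] = f"<{unsubscribe_url}>"
    msg["List-Unsubscribe-Post"] = "List-Unsubscribe=One-Click"
    msg.set_content(body)
    return msg

# Placeholder values for illustration only
msg = build_message(
    sender="ana@example.com",
    recipient="prospect@example.org",
    subject="Quick question",
    body="Hello, ...",
    unsubscribe_url="https://example.com/unsubscribe/abc123",
)
```

The RFC also expects both headers to be covered by a valid DKIM signature, and the URL has to accept the unsubscribe POST request without asking the recipient for anything else. Keep the headers identical across variants so the unsubscribe experience is not a hidden test variable.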

Briefing template for each A/B test

Before launching any test, document:

1. Hypothesis: "If we change X, Y will increase because Z"

2. Variable: only one (offer, first line, CTA, length, subject)

3. KPI: positive response / scheduled meeting

4. MDE (minimum detectable effect): minimum improvement worth the effort (example: +1 response point)

5. Minimum sample per variant: calculated with statistical tool

6. Segments: ICP and strata (gmail/outlook/corporate, country, vertical, role)

7. Guardrails: bounce <2%, spam complaints <0.1%, unsubscribes <0.5%

8. Stopping criteria: fixed date or minimum n reached

9. Decision: A wins / B wins / inconclusive (no significance)

10. Documented learning: what you reuse and in which future campaigns

This briefing forces you to think before executing and avoids improvised tests that waste volume.
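If your team keeps test plans in code or a notebook, the template maps naturally onto a small data structure with a sanity check. This is one possible shape, not a standard: the class and field names are hypothetical, the allowed variables and default guardrails come from the list above, and the example hypothesis is illustrative.

```python
from dataclasses import dataclass, field

ALLOWED_VARIABLES = {"offer", "first line", "CTA", "length", "subject"}

@dataclass
class ABTestBrief:
    hypothesis: str              # "If we change X, Y will increase because Z"
    variable: str                # exactly one of ALLOWED_VARIABLES
    kpi: str
    mde_points: float            # minimum improvement worth the effort
    min_sample_per_variant: int
    segments: list
    stopping_criteria: str
    guardrails: dict = field(default_factory=lambda: {
        "bounce": 0.02, "spam_complaint": 0.001, "unsubscribe": 0.005})
    decision: str = "pending"    # later: "A wins", "B wins" or "inconclusive"
    learning: str = ""

    def problems(self):
        """List what is missing or inconsistent before the test may launch."""
        issues = []
        if self.variable not in ALLOWED_VARIABLES:
            issues.append("variable must be exactly one of the allowed options")
        if self.min_sample_per_variant <= 0:
            issues.append("minimum sample per variant is not set")
        if not self.stopping_criteria:
            issues.append("stopping criteria missing")
        return issues

brief = ABTestBrief(
    hypothesis="If we lead with time savings instead of ROI, positive replies "
               "will increase because the pain is felt daily",
    variable="offer",
    kpi="positive response rate",
    mde_points=1.5,
    min_sample_per_variant=3100,
    segments=["corporate domains", "SaaS", "Head of Sales"],
    stopping_criteria="minimum n reached",
)
```

Calling `brief.problems()` before launch returns an empty list only when the plan names a single allowed variable, a sample size and a stopping rule, which is exactly the discipline the template is meant to enforce.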

3 real scenarios where A/B testing drives results

SaaS startup scaling outbound with small team

A startup with 2 SDRs needs to maximize each contact. They can't afford to waste leads with generic messages.

A/B testing use:

They test offer (ROI vs time savings) with 1,500 leads per variant

They discover "time savings" generates +2.3 points of positive response

They apply learning to all future cadences

In 3 months, they double meetings without increasing volume

Key: with low volume, they prioritize high-impact tests (offer, angle) before details (subject, signature).

Industrial company launching new product

An industrial B2B company wants to validate messages for a new product before investing in more SDRs.

A/B testing use:

They test 3 different angles: cost reduction, regulatory compliance, operational efficiency

They segment by vertical: manufacturing vs logistics vs construction

They discover regulatory compliance works better in construction, but cost reduction wins in manufacturing

Result: they personalize messages by vertical and improve meeting rate +40% vs generic message.

Key: ICP segmentation and specific learnings by vertical.

Growth agency testing multichannel cadences

An agency manages outbound for multiple clients and wants to optimize complete cadences.

A/B testing use:

Cadence A: Email (day 0) → LinkedIn (day 2) → Email (day 5) → Call (day 8)

Cadence B: Email (day 0) → Email (day 3) → LinkedIn (day 5) → Email (day 7)

They measure attribution by last-touch and time-decay

Result: Cadence A generates more early responses, but Cadence B has better final meeting rate (+15%).

Key: test complete flows, not isolated messages.

Why Enginy AI facilitates A/B testing in cold email without sacrificing deliverability

Doing rigorous A/B testing in cold email requires infrastructure, clean data, and consistent execution. This is where many companies get stuck: they want to test but don't have the necessary setup.

Enriched data and precise segmentation

Enginy aggregates data from 30+ sources and uses waterfall enrichment with multiple providers, going beyond typical lead mining software. This gives you:

Complete coverage: valid emails, updated roles, intent signals

Precise segmentation: you can stratify tests by vertical, size, role, geography

Data hygiene: reduce bounces and improve deliverability from the start

When your data is clean and complete, your tests are more reliable because you eliminate noise caused by invalid emails or incorrect roles.

Multichannel execution with consistency

Traditionally, sales prospecting is done through isolated channels (email, LinkedIn, phone). With Enginy, a modern B2B prospecting tool, you can integrate all prospecting into a single automated flow, with centralized data to make smarter decisions.

This facilitates A/B testing of complete cadences:

Email + LinkedIn + follow-up in a single flow

Clear attribution of which touchpoint generated the response

Consistency in timing and sequence between variants

When all channels are connected, you can test complete strategies instead of isolated messages.

Controlled deliverability infrastructure

Enginy allows you to maintain technical guardrails during tests:

Same authentication (SPF, DKIM, DMARC) in all variants

Monitoring of bounces, spam complaints and placement

Controlled warmup and consistent volume

This ensures your "winners" really are better in copy, not simply better delivered by different technical configuration.

CRM integration to measure real conversion

Enginy integrates with existing CRMs (HubSpot, Salesforce, Pipedrive) without needing to replace them. This allows you to:

Measure end-to-end conversion: from email to meeting and opportunity

Calculate real ROI of each variant

Close the loop between outreach and pipeline

Without CRM integration, you can only measure responses. With integration, you measure business impact.

Productivity: do more tests without more resources

Enginy AI allows sales teams to be much more productive, automating repetitive tasks and saving hours of work, and it complements your existing AI sales tools.

Instead of manually configuring each test, segmenting lists, launching cadences and consolidating results in spreadsheets, you can:

Configure tests in minutes

Execute variants with automatic consistency

Get segmented result reports by ICP

This means you can do more tests with less effort and learn faster.

Frequently Asked Questions (FAQs)

What is A/B testing in cold email?

A/B testing in cold email is an experimental method where you send two versions of a message (A and B) to random groups of prospects to determine which generates better results. Unlike email marketing, in cold email you optimize for positive responses and meetings, not clicks.

How many contacts do I need for a valid A/B test?

It depends on your baseline (current conversion rate) and the minimum improvement you want to detect. As a reference:

To go from 4% to 5.5% positive response: ~3,100 contacts per variant

To go from 4% to 5%: ~6,700 per variant

To go from 1% to 1.5%: ~7,700 per variant

Without sufficient volume, it's better to accumulate learnings over several weeks or test bigger changes.

What should I test first in cold email?

Prioritize variables with highest impact on response:

Offer and angle: main pain point, promise, friction level

First line (first 150 characters): personalization vs template

CTA: 1 step vs 2 steps, closed vs open

Length and structure: short vs additional context

Leave subjects and signatures for later. The offer usually matters far more than the subject.

Why isn't open rate a good metric?

Apple Mail Privacy Protection preloads content and can artificially inflate opens. Plus, opens don't predict positive responses: someone can open out of curiosity and never respond.

Better metrics:

Positive response rate (real interest, accept meeting)

Scheduled meeting rate

Conversion rate to opportunity in CRM

Optimize for useful conversations, not curiosity.

How do I avoid burning my domain during tests?

Deliverability guardrails:

Keep spam complaints below 0.1%, the level Gmail recommends

Keep bounce rate <2%

Implement complete authentication: SPF, DKIM, DMARC

Use one-click unsubscribe (RFC 8058)

Don't change technical infrastructure between variants

If a variant increases complaints or bounces, stop immediately even if KPI goes up.

Can I test multiple variables at once?

Technically yes (factorial design), but not recommended for most teams. Testing multiple variables requires:

A much larger sample (4x for a 2x2 factorial)

More complex statistical analysis

Risk of interactions that confuse results

Better approach: 1 hypothesis, 1 variable, 1 test. Learn, apply, repeat.

How do I measure if a response is "positive"?

Manually classify a sample then automate:

Positive: "I'm interested", "let's talk", "tell me more", refers to decision-maker, accepts meeting

Neutral: "not now", "try in Q2", "send me info"

Negative: "not interested", "not a fit", "unsubscribe"

Noise: OOO, auto-replies, bounces

Only optimize for positives. A 15% reply rate doesn't help if 12 of those 15 points are "no thanks".

How long should an A/B test last?

Depends on your sending volume and response cycle:

Minimum: time to reach calculated minimum sample

Recommended: 2-3 weeks to capture late responses and follow-up effects

Maximum: 1 month (after that, external factors can contaminate)

Rule: define stopping criteria before starting and respect it, even if "it looks like it's winning" earlier.

How does A/B testing work if I do multichannel outreach?

In multichannel prospecting (email + LinkedIn + calls), A/B testing gets complicated because conversion can come from the combination of touchpoints.

Best approach:

Test complete cadences, not isolated messages

Use last-touch or time-decay attribution to assign credit

Keep all channels constant except the variable you're testing

If you change email and LinkedIn at once, you won't know which generated the result.

Can Enginy help me with A/B testing in cold email?

Yes. Enginy facilitates rigorous A/B testing by providing:

Enriched data from 30+ sources for precise segmentation

Consistent multichannel execution (email + LinkedIn) in a single flow

Controlled infrastructure to maintain stable deliverability

CRM integration to measure real conversion (meetings, opportunities)

Automation that lets you do more tests without more resources

This allows you to test complete strategies rigorously, not just change subjects.